Robotic Manipulation

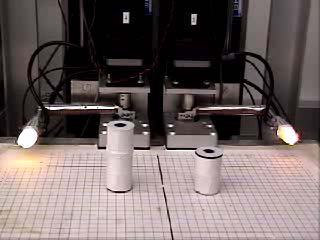

The HRL Manipulator's two "fingers."

Apparatus

The HRL Manipulator consists of two two-link "fingers," each with three degrees of freedom. Each finger's two links rotate in a plane parallel to the worksurface, and the entire finger can be moved perpendicular to the worksurface. Actuation is provided by six servomotors that communicate with a computer via the RS-232 serial protocol. In addition, a camera mounted above the worksurface tracks the movements of the manipulator and of the objects being manipulated.
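
As an illustration of the geometry described above, the fingertip position of one finger can be sketched with standard two-link planar kinematics plus an independent height. This is a minimal sketch, not the lab's software: the link lengths `l1` and `l2`, the angle conventions, and the function name are assumptions made for the example.

```python
import math

def fingertip_position(theta1, theta2, z, l1=1.0, l2=1.0):
    """Return (x, y, z) of the fingertip of a two-link planar finger.

    theta1: angle of the first link from the x-axis, in radians
    theta2: angle of the second link relative to the first, in radians
    z:      height of the whole finger above the worksurface
    l1, l2: link lengths (hypothetical values, not from the source)
    """
    # The two links rotate in a plane parallel to the worksurface.
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    # The third degree of freedom moves the finger perpendicular to that plane.
    return (x, y, z)

# A fully extended finger along the x-axis, raised 0.5 units.
print(fingertip_position(0.0, 0.0, 0.5))
```

The two joint angles and the height correspond to the three degrees of freedom per finger, which is consistent with six servomotors for two fingers.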

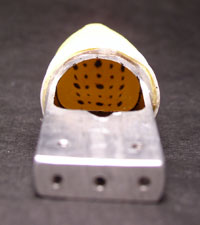

The camera's view of the fingertip membrane dots.

Each finger has a tactile sensor attached to its end, which consists of a compliant fingertip made from a latex membrane filled with silicone. When the fingertip deforms, a set of dots drawn on the inside of the membrane moves. The new locations of the dots are captured by a small camera mounted inside the fingertip. The camera image is processed to determine the locations of the dots on the image plane. We use these 2-dimensional coordinates, along with a physical model of the fingertip, to determine the dots' locations in 3-dimensional space. This provides information about the shape of the fingertips, which can be used as feedback in our manipulation algorithms.
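
The image-processing step can be sketched as follows. This is only an illustration under stated assumptions: dots are assumed to appear dark on a lighter background, they are found by simple thresholding and flood fill, and the camera is treated as an ideal pinhole. The function names, the threshold, and the camera parameters are hypothetical. Each 2-dimensional dot location only constrains the dot to a viewing ray; the physical model of the fingertip, which is not reproduced here, is what determines where along that ray the dot lies.

```python
from collections import deque

def dot_centroids(image, threshold=128):
    """Return (row, col) centroids of dark blobs in a grayscale image.

    image: list of equal-length rows of pixel intensities (0-255).
    Pixels below `threshold` are assumed to belong to a dot.
    """
    rows, cols = len(image), len(image[0])
    seen = [[False] * cols for _ in range(rows)]
    centroids = []
    for r0 in range(rows):
        for c0 in range(cols):
            if seen[r0][c0] or image[r0][c0] >= threshold:
                continue
            # Breadth-first flood fill over 4-connected dark pixels.
            queue = deque([(r0, c0)])
            seen[r0][c0] = True
            pixels = []
            while queue:
                r, c = queue.popleft()
                pixels.append((r, c))
                for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                    if (0 <= nr < rows and 0 <= nc < cols
                            and not seen[nr][nc]
                            and image[nr][nc] < threshold):
                        seen[nr][nc] = True
                        queue.append((nr, nc))
            n = len(pixels)
            centroids.append((sum(p[0] for p in pixels) / n,
                              sum(p[1] for p in pixels) / n))
    return centroids

def pixel_to_ray(row, col, focal_px, center):
    """Unit direction of the viewing ray through a pixel (ideal pinhole camera).

    focal_px: focal length in pixels; center: (row, col) of the principal point.
    Both are assumed calibration values.
    """
    x = (col - center[1]) / focal_px
    y = (row - center[0]) / focal_px
    norm = (x * x + y * y + 1.0) ** 0.5
    return (x / norm, y / norm, 1.0 / norm)

# A 5x5 test image with two dark dots on a white background.
image = [[255] * 5 for _ in range(5)]
image[1][1] = image[1][2] = 0
image[3][4] = 0
print(dot_centroids(image))
```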

Block Stacking

One task currently being investigated on this system is block stacking. We would like to be able to place two blocks of unknown height anywhere in the manipulator's workspace and have it stack one on top of the other. This requires the manipulator to identify the locations of both blocks, send the fingers to one of the blocks, grasp the block securely, lift it over the other block, and place it on top of that block, all without causing any collisions or dropping any blocks. This task makes use of the overhead camera to locate the blocks and the tactile sensors to ensure a secure grasp and detect block placement. It also requires accurate path planning and execution to avoid any collisions.
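
The sequence of steps above can be written down as an ordered plan in which each step ends on a stop condition. The sketch below is an assumption-laden illustration, not the system's planner: the step names, the `Step` type, the stop-condition labels, and the `travel_height` parameter are all hypothetical. Because block heights are unknown, the grasp and placement steps are written to end on tactile events rather than at precomputed heights.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class Step:
    name: str                   # what the fingers do
    xy: Tuple[float, float]     # where, in overhead-camera coordinates
    stop_when: str              # event that ends the step

def stacking_plan(pick_xy, place_xy, travel_height):
    """Return an ordered plan for stacking the block at pick_xy onto place_xy.

    travel_height is an assumed safe carrying height, chosen to clear both
    blocks so that horizontal moves cannot cause collisions.
    """
    at_height = f"height == {travel_height}"
    return [
        Step("raise_fingers", pick_xy, at_height),
        Step("move_over_block", pick_xy, "position_reached"),
        Step("lower_around_block", pick_xy, "grasp_height_reached"),
        Step("close_fingers", pick_xy, "tactile_grasp_secure"),
        Step("lift_block", pick_xy, at_height),
        Step("move_over_block", place_xy, "position_reached"),
        Step("lower_block", place_xy, "tactile_placement_detected"),
        Step("open_fingers", place_xy, "tactile_contact_lost"),
        Step("raise_fingers", place_xy, at_height),
    ]

for step in stacking_plan((0.10, 0.20), (0.30, 0.25), travel_height=0.15):
    print(f"{step.name:20s} at {step.xy}  until {step.stop_when}")
```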

Motion Description

A fundamental question addressed in this research is how best to describe motions. Programming a robot is often time-consuming and specific to the hardware being used. In such cases, motions are described in terms of specific commands that control the robot's hardware and are not portable to other robots. It would be convenient to have a machine-independent language for describing the motions that a robot performs.

Programming convenience is not our only goal, however. We want to establish a mathematical framework for describing motion in general. Our strategy involves combining motion segments or "atoms," composed of open-loop and closed-loop control laws, into motion programs which are executed by the robot. Such a framework would be useful in formulating problems that involve the amount of information necessary to describe a motion. These problems include minimizing the data required to control a robot (e.g. in space robotics) and designing robots for tasks whose motions must be described with a minimum of instructions.
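
One way to picture atoms and motion programs is sketched below. The representation is an assumption made for illustration, since the text above does not define an atom formally: here an atom is taken to be a control law `u(t, x)`, an interrupt condition on the state, and a maximum duration, and a program is a list of atoms run in order. The plant is a toy one-dimensional integrator (dx/dt = u), not a model of the manipulator.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

@dataclass
class Atom:
    control: Callable[[float, float], float]  # u(t, x): command from time and state
    interrupt: Callable[[float], bool]        # ends the atom early when True
    duration: float                           # maximum time the atom may run

def run_program(program: List[Atom], x0: float, dt: float = 0.01) -> Tuple[float, List[float]]:
    """Run atoms in sequence on the toy plant dx/dt = u (Euler integration).

    Returns the final state and the time spent in each atom.
    """
    x = x0
    elapsed = []
    for atom in program:
        steps = 0
        max_steps = round(atom.duration / dt)
        while steps < max_steps and not atom.interrupt(x):
            x += atom.control(steps * dt, x) * dt
            steps += 1
        elapsed.append(steps * dt)
    return x, elapsed

program = [
    # Open-loop atom: constant command that ignores the state, run for 0.5 s.
    Atom(control=lambda t, x: 1.0, interrupt=lambda x: False, duration=0.5),
    # Closed-loop atom: proportional feedback toward x = 1, interrupted once
    # the state is within 0.01 of the target (or after at most 2 s).
    Atom(control=lambda t, x: 5.0 * (1.0 - x),
         interrupt=lambda x: abs(x - 1.0) < 0.01,
         duration=2.0),
]

final_state, times = run_program(program, x0=0.0)
print(final_state, times)
```

In this representation the whole motion is described by two short atoms rather than a stream of setpoints, which gives a concrete sense of what counting the instructions needed to specify a motion could mean.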

Publications

Roger W. Brockett, "Minimizing Attention in a Motion Control Context," Proc. 42nd IEEE Conf. Dec. and Control, Maui, HI, pp. 3349-3352, Dec. 2003.


Dimitrios Hristu-Varsakelis and Roger W. Brockett, "Experimenting with Hybrid Control," IEEE Control Systems Magazine, Vol. 22, Issue 1, February 2002, pp. 82-95.


Nicola Ferrier and Roger W. Brockett, "Reconstructing the Shape of a Deformable Membrane using Image Data," International Journal of Robotics Research, Vol. 19, No. 9, September 2000, pp. 795-816.


Roger W. Brockett, "On the Computer Control of Movement," Proc. IEEE Conf. on Robotics and Automation, Philadelphia, PA, pp. 534-540, 1988.
